Best AI Guardrails in 2026: Tools, Architecture, and How to Choose

Most "best AI guardrails" lists treat the problem as a shopping decision: here are ten tools, pick one. That framing misses the point. Guardrails are not a single product. They are a set of decisions about where in your system you check inputs, enforce policies, validate outputs, and observe behavior. The tool you choose matters — but what matters more is whether that tool actually holds up when someone tries to break it.

What guardrails actually are

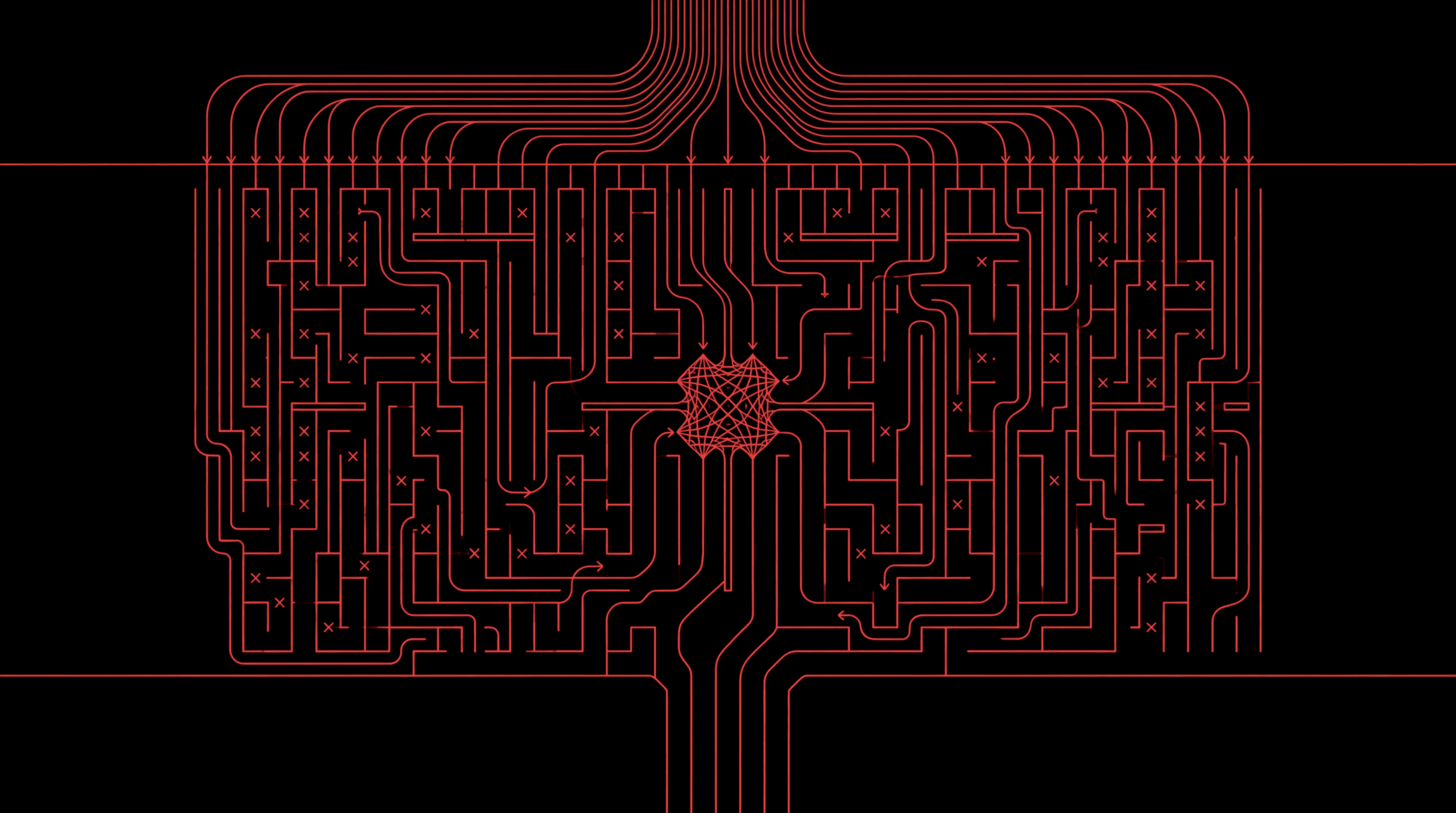

An AI guardrail is any runtime mechanism that sits between an AI model and the rest of the system to constrain what the model can receive, produce, or cause. That definition is deliberately broad. A content filter that blocks toxic output is a guardrail. So is a policy engine that prevents an agent from calling a privileged API. So is a PII scanner that redacts personal data before it reaches a log store.

What unites them is the operating principle: guardrails apply constraints at runtime, not at training time. They do not change what the model knows or how it reasons. They change what the model is allowed to do in a given context, which is a fundamentally different kind of control.

This distinction matters because it shapes how you think about failure. Training-time alignment makes the model less likely to produce harmful outputs. Runtime guardrails make the system less able to act on them. Both are necessary. Neither is sufficient alone.

Key distinction

Alignment makes the model less likely to misbehave. Guardrails make the system less able to act on misbehavior. Production AI needs both.

Why guardrails matter more now

Two years ago, most guardrail conversations were about content filtering: stop the chatbot from saying something toxic. That was a real problem, but it was a narrow one. The model produced text. The text was either safe or unsafe. A classifier could usually tell.

In 2026, the problem has changed shape. Three forces are driving that change.

Models now take actions

The shift from chatbots to agents means LLMs are no longer just producing text. They are calling APIs, querying databases, writing files, sending emails, and triggering workflows. A guardrail failure in 2024 meant a bad response. A guardrail failure in 2026 can mean a bad action: data deleted, money transferred, privileged information forwarded. The stakes are structurally higher because the blast radius has moved from "a user saw something inappropriate" to "the system did something irreversible."

Regulatory pressure is real

The EU AI Act is now in effect. Sector-specific regulations in finance, healthcare, and government procurement increasingly require documented safety controls for AI systems in production. "We fine-tuned the model to be helpful" is no longer a sufficient compliance story. Regulators want to see runtime controls, logging, and evidence that safety mechanisms were in place and tested.

The attack surface is combinatorial

A modern AI system is not just a model. It is a model embedded in a pipeline of retrieval, tool use, memory, multi-turn conversation, and sometimes multi-agent collaboration. Each component adds surface area. Prompt injection through retrieved documents, tool misuse through overpermissioned APIs, memory poisoning through persistent state — these are not hypothetical. They are the failure modes that red teams find routinely. Guardrails in 2026 must cover this full surface, not just the model's chat interface.

Customizability problem

Here is the tradeoff that most guardrail guides do not talk about: customizability and performance are in tension. Every production team eventually discovers this.

Out-of-the-box guardrails ship with a fixed taxonomy — hate speech, violence, sexual content, PII, maybe prompt injection. For a generic chatbot, that is fine. But production systems are not generic. A healthcare copilot needs to block medical misinformation while allowing clinical discussion. A financial assistant needs to enforce regulatory language requirements that no generic model has ever seen. An internal HR agent needs to recognize your specific organizational policies.

The moment you need custom policies, you have two options:

- Prompt a general-purpose LLM to act as a classifier with your policies in the system prompt. This is flexible — you can express any policy in natural language. But it is also slow (seconds, not milliseconds), expensive at scale, and fragile under adversarial pressure because the "guard" itself can be jailbroken.

- Train a dedicated classifier on your specific policies. This is fast and robust — purpose-built models are harder to evade than prompted LLMs. But training requires data, infrastructure, and a pipeline to generate adversarial examples that stress-test the guard against the attacks it will actually face.

Most teams default to option one because it is easier to prototype. Then they discover the latency cost: a GPT-5 call acting as a safety classifier adds 5–11 seconds per check, which is untenable for real-time applications. Even GPT-5-mini at 5.6 seconds is orders of magnitude slower than a purpose-built guard at 29 milliseconds.

The best approach is adversarial training: take the custom policies, generate synthetic training data and red-team examples, and train a dedicated small model that runs at guardrail-grade latency. This is what the strongest guardrail providers now offer as a managed service — converting your bespoke policies into fast, robust classifiers through an adversarial training pipeline.

The customizability spectrum

Ten tools that matter

Below are the guardrail tools worth evaluating in 2026. For each, we describe what it does well, where it falls short, and who should consider it. Where public benchmark data exists, we cite it — because the gap between marketing claims and adversarial-condition performance is often enormous.

GA Guard (General Analysis)

Adversarially trained guardrail family

Strength: State-of-the-art F1 scores across every public benchmark (OpenAI Moderation, WildGuard, HarmBench), with GA Guard Thinking posting 0.983 F1 on HarmBench. The family spans three tiers: GA Guard (default, ~15x faster than cloud providers), GA Guard Lite (~25x faster, viable on edge hardware), and GA Guard Thinking (highest assurance, adversarially hardened). First guards to natively support 256k-token long-context moderation for agent traces and memory-augmented workflows. Trained on red-team attack data and policy-driven synthetic data, not just static benchmarks.

Limitation: Custom policy guardrails tailored to a company's bespoke policies require working with General Analysis directly, though the open-source models cover the standard taxonomy out of the box. GA Guard Thinking trades some latency for its stronger adversarial hardening (0.65s vs 0.029s for the base model).

Best for: Teams that need the strongest publicly benchmarked guardrails with production-grade latency, especially for agentic and long-context workloads. Open-source models on Hugging Face, or managed via SDK/API.

NVIDIA NeMo Guardrails

Open-source framework

Strength: Programmable safety rules via Colang scripting. Fine-grained control over conversational flows, topical constraints, and multi-LLM orchestration. GPU-accelerated with sub-50ms latency. The Nemoguard 8B model is a reasonable open-source option for teams already in the NVIDIA ecosystem.

Limitation: The Nemoguard 8B model scores 0.793 F1 on OpenAI Moderation and 0.875 on HarmBench — respectable but meaningfully behind the state-of-the-art. Colang has a learning curve and a small community. Requires engineering investment to define and maintain policies.

Best for: Teams that want full programmatic control over guardrail conversation flows and are comfortable writing policy-as-code in the NVIDIA stack.

Guardrails AI

Validator framework

Strength: Over 60 pre-built validators through a community hub, a RAIL spec for structured output enforcement, and a composable architecture that lets you chain validators into pipelines. Server mode for production use.

Limitation: Validators are individually useful but can become hard to reason about when stacked. Not primarily a security tool — it is better suited for output quality enforcement (format, schema, factuality) than adversarial defense.

Best for: Teams building structured-output applications that need composable validation: format enforcement, schema compliance, and domain-specific checks.

Lakera Guard

Real-time detection API

Strength: Purpose-built for prompt injection and jailbreak detection. Low-latency REST API with multi-language support and a threat analytics dashboard.

Limitation: Benchmarks show 0.697 F1 on OpenAI Moderation and 0.662 on WildGuard — significantly behind top-performing guards. On adversarial benchmarks, accuracy drops further (0.525 on GA Jailbreak Bench with a 0.825 false-positive rate). Focused on input-side threats only.

Best for: Organizations looking for a simple, API-first prompt injection detector as a fast initial layer.

LLM Guard

Open-source scanner

Strength: Zero-dependency library with input and output scanning, built-in PII anonymization, and support for toxicity, relevance, and injection detection. Easy to self-host and extend.

Limitation: Less sophisticated detection than commercial or adversarially trained alternatives. No managed infrastructure, analytics dashboard, or automatic pattern updates. You maintain it yourself.

Best for: Developers and smaller teams that want a lightweight, self-hosted scanning layer without vendor lock-in or usage-based pricing.

AWS Bedrock Guardrails

Cloud-native guardrail service

Strength: Deeply integrated with the AWS Bedrock model hosting stack. Content filters with configurable severity, denied topic policies, word filters, PII redaction, and contextual grounding checks.

Limitation: Benchmarked at 0.754 F1 on OpenAI Moderation and 0.797 on HarmBench. On adversarial prompts, accuracy collapses: 0.607 F1 on GA Jailbreak Bench, and on long-context inputs it posts a 1.000 false-positive rate (blocking everything). Only works with Bedrock-hosted models.

Best for: Teams already on AWS Bedrock who want basic guardrails tightly coupled to their inference pipeline and can accept the accuracy tradeoffs.

Azure AI Content Safety

Cloud-native content filtering

Strength: Default content filters across hate, self-harm, sexual, and violence categories with configurable severity thresholds. Expanding into agentic controls for tool-call interception.

Limitation: Performs well on narrow content classification but collapses under adversarial conditions: 0.193 F1 on GA Jailbreak Bench with minimal coverage of non-standard attack vectors. On long-context traces, F1 drops to 0.046. Agent-specific controls still in preview.

Best for: Microsoft ecosystem teams who want basic built-in safety with minimal integration effort and can layer stronger guards on top.

Google Vertex AI Model Armor

Cloud-native safety filters

Strength: Native safety filters for Gemini models, built-in grounding with Google Search, and reasonable HarmBench performance (0.945 F1).

Limitation: Tightly coupled to Google Cloud. On adversarial inputs, drops to 0.190 F1 on GA Jailbreak Bench. The slowest cloud option at 0.873s average latency. Safety controls are model-level rather than application-level.

Best for: Teams building on Google Cloud with Gemini models who want native safety and can accept cloud lock-in.

Llama Guard 4 (Meta)

Open-source safety classifier

Strength: Free, open-source 12B parameter model with reasonable baseline performance (0.737 F1 on OpenAI Moderation, 0.961 on HarmBench). Widely adopted as a community standard.

Limitation: Under adversarial pressure, accuracy falls to 0.796 F1 on GA Jailbreak Bench. On long-context traces, it struggles (0.602 F1 with 0.516 FPR). At 0.459s latency, it is significantly slower than purpose-built guard models.

Best for: Teams that want a free, open-source baseline guard and are willing to accept the accuracy and latency tradeoffs versus adversarially trained alternatives.

Galileo Protect

Evaluation + firewall platform

Strength: Combines an LLM firewall with evaluation intelligence. Detects hallucinations, prompt injections, PII leakage, and off-brand responses. Includes observability for monitoring production behavior.

Limitation: Part of a larger evaluation platform — more surface area to learn and manage. Pricing reflects full-platform positioning. Less publicly benchmarked on adversarial robustness than dedicated guard models.

Best for: Teams that want guardrails and evaluation in the same platform, especially those already investing in LLM observability.

Benchmarks

Marketing copy is one thing. Adversarial benchmarks are another. The charts below are from public benchmarking of every major guardrail against three suites: OpenAI Moderation, WildGuard, and HarmBench — plus adversarial evaluations using RL-trained attacker models that generate novel, out-of-distribution jailbreaks.

The results reveal a pattern: most guardrails perform respectably on clean, in-distribution test sets. Under adversarial conditions — the kind that matter in production — the field separates dramatically.

The takeaway is not that one vendor wins. It is that clean-data benchmarks are misleading. A guard that scores well on curated test sets but collapses under adversarial prompts is not production-ready. The gap between in-distribution accuracy and adversarial robustness is the single most important metric when evaluating guardrails, and most comparison guides never measure it.

Three ideas for choosing

The tool list above is deliberately non-hierarchical. There is no single "best" guardrail tool, because the right choice depends on your architecture, your threat model, and where you need coverage. Three principles help cut through the noise.

1. Adversarial robustness matters more than clean-data accuracy

Every guardrail vendor will show you accuracy numbers. The question you should ask is: accuracy against what? A guard that scores 95% on a curated benchmark but drops to 20% under adversarial pressure is not a production tool. It is a demo. The guards that matter are the ones trained on red-team data, hardened through adversarial training pipelines, and tested against the attack techniques that real attackers actually use.

This is why the benchmarking methodology matters as much as the numbers. Static benchmarks with known prompts test recognition. Adversarial benchmarks with RL-generated attacks test resilience. Production systems face the latter.

2. Latency is a constraint, not a feature

A guardrail that adds 5 seconds to every interaction will be disabled within a week. Product teams will not tolerate it, and they should not have to. The strongest guardrails run in tens of milliseconds — fast enough that users never notice them. If your guardrail requires calling a large language model for every check, you have a latency problem that will eventually become a deployment problem.

The tiered approach is the practical answer: fast, lightweight guards for the hot path, and heavier assurance-grade guards for high-risk actions where a few hundred milliseconds is acceptable. The GA Guard family models this pattern explicitly: Lite for speed, default for balance, Thinking for maximum assurance.

3. Guardrails without red teaming are untested guardrails

The most common failure mode in guardrail adoption is not choosing the wrong tool. It is deploying guardrails and never adversarially testing whether they actually hold. A content filter that has never been probed with real injection techniques is security theater. A policy engine that has never been tested against multi-step manipulation is hope, not defense.

This is why guardrails and red teaming are complementary, not alternative, investments. Red teaming tells you where the guardrails fail. Guardrails reduce the blast radius of the failures red teaming finds. Without both, you either do not know what is broken, or you know but cannot stop it.

What to do next

If you are evaluating guardrails for a production AI system, start with the threat model, not the vendor.

- Identify which risks actually apply to your deployment: prompt injection, PII leakage, tool misuse, policy violations, hallucination, or all of them.

- Test candidates under adversarial conditions, not just on clean data. If a vendor cannot show you adversarial benchmark results, that tells you something.

- Measure latency in your actual pipeline. A guardrail that works at 29ms on a benchmark may behave differently in your infrastructure.

- If you need custom policies beyond the standard taxonomy, ask how customization works. If the answer is "prompt an LLM," understand the latency and robustness implications.

- Red team your guardrails after deployment. The threat landscape evolves. Static defenses degrade. Continuous adversarial testing is not optional.

The deepest lesson from the current guardrail landscape is that runtime safety is not a feature you bolt on. It is an architectural property of the system. The tools matter, but whether they have been adversarially hardened matters more.

Related guides

For the threat model behind guardrail design, read the OWASP Top 10 for Agentic AI. For the testing methodology that validates whether guardrails hold, start with What is AI red teaming?. For the conceptual foundation, see What are AI guardrails?. To explore our guardrails in detail, see the GA Guard release post or the runtime security product page.

Related guides

Continue reading

PRIMER

What Are AI Guardrails?

A complete guide to AI guardrails: what they are, the eight main types, how they work architecturally, and how to evaluate them for production LLM and agentic deployments.

Read

PRIMER

MCP Server Security: A Threat Model for Agent Tool Supply Chains

The Model Context Protocol expanded what AI agents can reach, and expanded the attack surface across at least nine distinct vectors. A primary-source threat model for MCP servers, with concrete controls, real CVEs, and the GA Supabase exploit walked end to end.

Read

PRIMER

What Is AI Red Teaming? A Practitioner's Guide

AI red teaming is adversarial testing of AI systems to find exploitable vulnerabilities before attackers do. Learn how it works, key techniques, real exploit examples, and how to implement it.

Read

Newsletter

Get the next research note.

Short updates on agent attacks, red-team methods, runtime guardrails, and production AI security.

Occasional updates. Unsubscribe anytime.