What Are AI Guardrails?

The simplest way to understand AI guardrails is to stop thinking about model alignment and start thinking about deployment control. Alignment tries to make a model behave well from the inside. Guardrails enforce acceptable behavior from the outside, at every boundary where the model touches users, data, tools, and external systems, regardless of what the model internally "wants" to do.

Short answer

AI guardrails are runtime systems that inspect, classify, and gate every interaction with an AI model against a set of security and safety policies. They do not change the model. They control what gets in, what comes out, what tools are called, and what actions are permitted. They generate a trace for every decision.

Why guardrails exist

Large language models are no longer sandboxed chatbots. They read private documents through RAG pipelines. They invoke tools through function-calling and MCP servers. They plan across multiple steps, delegate subtasks to other agents, write and execute code, and sometimes act with real authority over business-critical systems. In that world, an unsafe output is not just embarrassing. It can be an unauthorized database mutation, a leaked credential, or an agent that sends fabricated data to a customer.

Alignment techniques like RLHF and instruction tuning reduce the probability of bad behavior, but they cannot eliminate it. Models can still be jailbroken. They still hallucinate. They still leak data they were given in context. And new attack techniques emerge faster than any retraining cycle can address. The problem gets worse as models gain more capability: a model that can call twenty MCP tools has twenty more ways to cause damage than one that can only generate text.

Guardrails exist because production AI needs a control layer that can be updated, composed, and tested independently of the model. The analogy is straightforward: you do not rely solely on developer education to prevent SQL injection. You deploy a WAF, parameterize queries, and validate inputs. AI guardrails are the equivalent pattern for model deployments: an independent enforcement boundary that operates at runtime, covering not just text but every tool call, agent step, and external action the model can take.

What guardrails do

Guardrails operate at four distinct layers. Most articles only cover the first two. The third and fourth are where the real complexity lives in production agentic systems.

1. Input-side enforcement

Before a user message, retrieved document, or tool output reaches the model, input guards classify it against a set of policies. Is this a prompt injection attempt? Does it contain PII that should be redacted? Is it an off-topic request that the system was not designed to handle? If the input violates policy, the guard can reject it, redact the problematic content, or flag it for human review before the model ever processes it.

Input guards also cover retrieved context in RAG pipelines. A document fetched from a vector store can contain injected instructions just as easily as a user message. Any text that enters the model's context window is an input and should pass through the same guard pipeline.

2. Output-side enforcement

After the model produces a response, output guards classify it before it reaches the user. Does the response contain toxic or harmful content? Does it leak sensitive information? Is the answer grounded in the provided context or is it a hallucination? Does it comply with organizational policy? Output guards apply block-redact-escalate logic on the response side.

3. Action-layer enforcement

This is where guardrails diverge from simple content filtering. In agentic systems, the model does not just produce text. It produces tool calls: structured requests to invoke functions, query databases, call APIs, or interact with MCP servers. An action guard sits between the model's tool-call output and the actual execution of that call.

Before a tool executes, the action guard validates the call against a set of constraints:

- Is this tool on the allowlist for this agent's current task?

- Do the arguments conform to the tool's schema and declared constraints?

- Does the caller have the required permission scope?

- Has the agent already exceeded its action budget for this session?

- What is the blast radius if this call goes wrong? Is it reversible?

For MCP servers specifically, action guards can validate tool names against the declared tool manifest, check that arguments match the MCP tool's input schema, enforce per-server rate limits, and log every invocation with full argument payloads for audit. This is the layer that prevents an agent from calling a filesystem-write tool when it was only authorized to read, or from invoking a database-deletion endpoint because a prompt injection steered its plan.

4. Observability and trace collection

Guardrails are not just gatekeepers. Every guard decision, at every layer, generates structured telemetry: what was evaluated, which guard fired, what the classification score was, what action was taken, and how long it took. This trace data is the foundation of AI observability.

In a well-instrumented system, the observability plane captures:

- Guard decision logs for every input, output, and action gate, including the raw classification scores and the policy rule that determined the outcome.

- Tool and MCP call traces with full argument payloads, execution results, latency, and any guard interventions that modified or blocked the call.

- Agent step logs that record the reasoning chain: what the model planned, which tools it chose, what results it received, and how it decided to proceed. This matters because a guardrail that blocks step 4 of a 7-step chain needs to be understood in the context of steps 1 through 3.

- Latency and cost accounting per guard, per tool call, and per session, so teams can identify performance bottlenecks and optimize guard configurations.

- Compliance audit trails that demonstrate, with logged evidence, that policies were enforced at runtime. This is what regulators and auditors actually require.

Without the observability layer, guardrails are a black box. You know something was blocked but not why, not in what context, and not whether the guard was right. The observability plane turns guardrails from a binary allow/block gate into a continuous security signal that teams can investigate, tune, and learn from.

Guardrail taxonomy

Guardrails are not a single technology. They are a family of classifiers, rule engines, and validation layers, each designed for a specific class of risk. Below are the eight most common categories deployed in production AI systems today, along with why each matters.

Prompt injection detection

Identifies attempts to override system instructions through user input, retrieved documents, or tool outputs so the model follows the developer's intent rather than the attacker's.

Why it matters: Prompt injection is the most broadly exploited LLM vulnerability because every text input is a potential instruction vector. A guardrail that catches injection before the model sees it neutralizes the most common first move in an attack chain.

Jailbreak and refusal-bypass detection

Detects social-engineering strategies, encoding tricks, role-play exploits, and multi-turn manipulation sequences designed to make the model ignore its safety training.

Why it matters: Jailbreaks differ from injection in that the attacker is not injecting new instructions but persuading the model to abandon existing ones. Guards here must generalize beyond known templates because jailbreak techniques evolve continuously.

Topical and off-topic filtering

Restricts the model to a defined domain or set of permitted topics, blocking conversations that drift into areas the deployment was not designed to handle.

Why it matters: An internal HR copilot should not answer questions about weapons. A customer-support agent should not draft legal opinions. Topic guards prevent scope creep that creates both reputational and legal exposure.

PII and sensitive data detection

Scans inputs and outputs for personally identifiable information, credentials, API keys, internal identifiers, and other data that should not cross trust boundaries.

Why it matters: Models can leak training data, repeat user-provided secrets in later turns, or surface PII from retrieved documents. A data guard on both sides of the model is the most direct mitigation for unintended data exposure.

Toxicity and harmful content filtering

Classifies outputs for hate speech, harassment, sexual content, self-harm instructions, violence, and other content categories that violate platform or organizational policy.

Why it matters: Even well-aligned models produce harmful content under adversarial conditions. Content guards are the last line of defense before unsafe text reaches users, and the one most visible to regulators and compliance teams.

Tool-call and MCP action validation

Intercepts tool calls, MCP server invocations, function executions, and agent actions before they run. Validates tool names against an allowlist, checks arguments against schemas, enforces permission scopes, and evaluates blast radius before granting execution.

Why it matters: In agentic systems the risk is not unsafe text but unsafe action. A model that invokes an MCP tool to delete database rows, send an email with fabricated data, or write to a filesystem can cause irreversible damage. Action guards are the only layer that can enforce least-privilege at the point of execution.

Compliance and policy enforcement

Maps model behavior to organizational policy frameworks, regulatory requirements, and industry standards such as the EU AI Act, NIST AI RMF, ISO 42001, and internal acceptable-use policies.

Why it matters: Compliance is increasingly non-negotiable for production AI. Policy guards provide an auditable enforcement point where governance intent is translated into runtime decisions with logged evidence.

Hallucination and groundedness checks

Evaluates whether model outputs are supported by the provided context, retrieved documents, or known facts rather than fabricated or unsupported claims.

Why it matters: Hallucination is the failure mode that most directly erodes user trust. Groundedness guards are especially important in RAG systems where users expect answers to be traceable to source material.

How guardrails work

Most guardrail systems share a common architecture even when the specific guards differ. Understanding this architecture matters because it determines latency, composability, failure handling, and what you can actually observe at runtime.

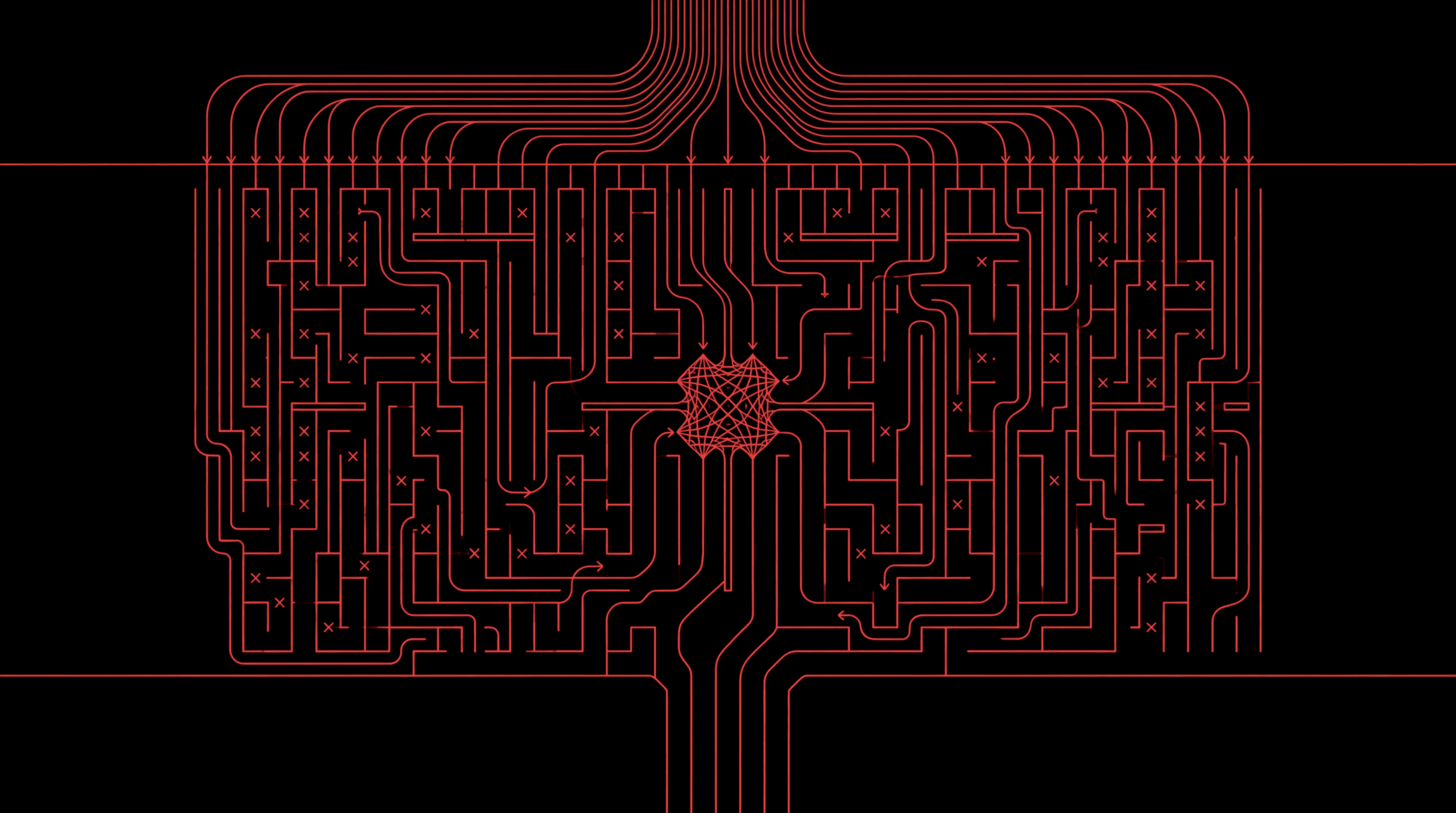

The interception pattern

Guardrails are deployed as middleware in every path between the client, the model, and external systems. Every user message passes through an input guard pipeline. Every model response passes through an output guard pipeline. And every tool call or MCP invocation passes through an action guard pipeline. This is functionally identical to how a reverse proxy or API gateway works in traditional web infrastructure, except the "requests" are natural language and structured tool calls rather than HTTP.

Classifier-based guards

The most common implementation uses a separate, smaller classifier model trained specifically for each guard type. For example, a prompt injection guard might be a fine-tuned transformer that takes the user message as input and outputs a binary classification: injection or benign. These classifiers are fast (often under 10ms per guard), cheap to run, and independently trainable. They can be retrained on new attack data without touching the main LLM.

Rule-based and hybrid guards

Some guard types are better served by deterministic rules. PII detection often uses regex patterns and named-entity recognition. Topic filtering can use keyword lists combined with embedding similarity. The strongest systems combine classifier-based and rule-based guards in a single pipeline, using the right tool for each risk category.

Action-layer interception

When the model emits a tool call (a structured JSON object specifying a function name and arguments), the action guard intercepts it before execution. For MCP servers, this means validating the tool name against the server's declared tool manifest, checking that arguments match the input schema, verifying that the agent's session has the required permission scope, and applying rate limits or budget caps. The guard can approve, deny, or modify the call (for example, stripping a sensitive argument before forwarding).

Action guards are what turn tool access from implicit trust into explicit, auditable validation. Without them, every MCP tool the model can see is a tool the model can invoke, and the only thing preventing misuse is the system prompt, which is the weakest link in the chain.

The observability plane

Every guard decision, at every layer, emits a structured event. These events flow into the observability plane, which aggregates them into traces, dashboards, alerts, and audit logs. A single user session might generate dozens of guard events: input classification, output classification, three tool-call validations, a PII redaction, and a hallucination flag. The observability plane stitches these into a coherent session trace that security teams can investigate end to end.

This is also where cost and latency accounting happens. If an agent calls twelve MCP tools across a five-step plan, the observability plane records the latency of each guard check, the execution time of each tool, and the total cost of the session. Teams can use this data to identify which guards are adding the most latency, which tools are being invoked most frequently, and where the system is spending compute.

Policy engines

Above the individual guards sits a policy engine that defines which guards are active, how they are configured, and what happens when a guard fires. Policies are typically expressed as declarative rules: "if the injection classifier score exceeds 0.85, block the request and log the event" or "if the agent invokes more than three write operations in a single session, require human approval for subsequent writes." This separation between detection (the guard) and decision (the policy) allows teams to tune enforcement without rewriting classifiers.

Parallel execution

Performance-sensitive deployments run multiple guards in parallel rather than sequentially. If the injection guard, PII guard, and topic guard are independent classifiers, they can all evaluate the same input concurrently. The policy engine aggregates their results and makes a single allow/block decision. This parallel pattern is how production guardrails achieve sub-10ms total overhead even with multiple active guards.

Guardrails vs alternatives

Guardrails are not the only way to make AI systems safer. They are one layer in a broader stack, and it is worth understanding what each layer does well and where it falls short.

| Layer | Approach | What it does well | Where it falls short |

|---|---|---|---|

| Training-time | RLHF and fine-tuning | Shifts the model's baseline behavior. Effective at reducing the probability of unsafe outputs across most inputs. | Expensive, slow to update, insufficient against adversarial inputs. Cannot be patched the day a new attack appears. Does nothing to control tool calls or agent actions. |

| Prompt-time | System prompts | Simple to deploy. Can encode application-specific rules quickly. | Brittle under adversarial pressure. Prompt instructions can be overridden, leaked, or ignored. Zero enforcement over tool execution. |

| Training-time | Constitutional AI | Trains the model to self-critique. Improves baseline behavior. | Cannot be updated in production. No external enforcement when self-critique fails. No visibility into tool calls or external actions. |

| Runtime | Guardrails | Independent enforcement at input, output, and action boundaries. Covers text, tool calls, MCP invocations, and agent plans. Composable, independently testable, and updatable without retraining. | Adds runtime latency. Requires its own engineering investment. Must itself be tested against adversarial inputs. |

The strongest AI safety posture combines all of these layers. RLHF and constitutional AI set a good baseline. System prompts encode application-specific rules. And guardrails provide the runtime enforcement that catches what the other layers miss, especially the action layer where models interact with tools, MCP servers, databases, and APIs that the other approaches cannot see.

Failure modes

Guardrails are not infallible. Understanding where they break down is as important as understanding what they do, because over-reliance on guardrails without awareness of their limitations creates a false sense of security.

Adversarial evasion

Guardrail classifiers are themselves machine learning models, which means they have their own adversarial attack surface. Attackers can craft inputs that are malicious to the target LLM but look benign to the guard classifier. This is why guardrail robustness, not just accuracy on clean data, is the metric that matters most. A guard that scores 99% on a benchmark but can be bypassed with simple encoding tricks is not production-ready.

Latency-accuracy tradeoffs

Stronger guards tend to be slower. A large transformer classifier will catch more attacks than a regex rule, but it also adds more latency. Production deployments must balance guard strength against user-facing latency budgets. The best systems offer tiered guard models: a fast, lightweight guard for low-risk paths and a high-assurance guard for critical workflows.

False positives

Overly aggressive guards block legitimate user requests. False positives erode user trust and create pressure to loosen guard thresholds, which in turn increases the attack surface. Precision matters as much as recall: a guardrail that blocks 5% of legitimate traffic will not survive contact with a product team.

Blind spots in the action layer

Many guardrail deployments only cover text inputs and outputs and leave tool calls, MCP interactions, and agent actions completely unguarded. This is the most common and most dangerous gap in practice. If your guardrails inspect the user's message but not the tool call the model generates in response, an attacker who steers the model into calling a dangerous tool has bypassed your entire safety stack. Action guards are not optional for agentic deployments.

Observability gaps

A guardrail that blocks a request but does not log why, in what context, or with what confidence is almost as bad as no guardrail at all. Without trace data, teams cannot investigate false positives, identify emerging attack patterns, measure guard effectiveness, or demonstrate compliance to auditors. The observability layer is not a reporting feature. It is a security requirement.

Static defenses against adaptive attackers

A guardrail deployed once and never updated will degrade over time as attackers adapt. Continuous red teaming, guard retraining, and policy updates are necessary to keep guardrails effective against an evolving threat landscape.

Evaluating guardrails

Whether you are building or buying guardrails, these are the dimensions that separate production-grade systems from demo projects.

- Adversarial robustness. How does the guard perform against inputs specifically designed to evade it? Clean-data accuracy is a floor, not a ceiling. Test against state-of-the-art jailbreaks, encoding tricks, and multi-turn evasion chains.

- Precision and recall balance. High recall (catching threats) is useless if it comes with precision so low that legitimate requests are constantly blocked. The best guards achieve high recall at production-acceptable precision.

- Latency overhead. What is the added latency per guard, and can guards run in parallel? Production systems should target single-digit milliseconds per guard and sub-20ms total pipeline overhead.

- Coverage breadth. Does the system cover injection, jailbreak, PII, toxicity, hallucination, topic, compliance, and action validation, or only a subset? Gaps in coverage are gaps in security. Pay special attention to whether the system covers tool calls and MCP interactions, not just text.

- Action-layer support. Can the system intercept and validate tool calls, function invocations, and MCP server interactions? Can it enforce per-tool permissions, argument schemas, rate limits, and blast-radius constraints? If not, it is a content filter, not a guardrail system for agentic AI.

- Observability depth. Does the system emit structured traces for every guard decision, tool call, and agent step? Can you reconstruct a full session timeline from the trace data? Can you alert on anomalous patterns and export audit evidence for compliance?

- Policy expressiveness. Can you define custom policies beyond the built-in categories? Can you express organization-specific rules, risk thresholds, per-tool permissions, and escalation workflows?

- Update velocity. How quickly can guard models be retrained or policies updated when a new attack technique or compliance requirement appears? Days matter.

Where guardrails fit

Guardrails are one layer in a defense-in-depth approach to AI security. They work best when combined with the other layers in a mature AI security program.

Asset discovery

Before you can guard an AI system, you need to know it exists. Asset discovery inventories every model, agent, MCP server, tool integration, and data source across the organization. You cannot apply guardrails to systems you have not catalogued, and you cannot write action-layer policies for tools you do not know about.

Red teaming

Automated and manual red teaming probes AI systems under adversarial conditions to discover failure modes before attackers do. Red teaming generates the evidence that informs which guardrails to deploy, how to configure them, and where coverage gaps remain. It also tests whether existing guardrails can actually be bypassed, including whether action guards hold when an attacker steers the model through multi-step tool-call chains.

Runtime guardrails

Guardrails enforce policy at every boundary: inputs, outputs, tool calls, MCP invocations, and agent actions. They are the real-time control layer that blocks, redacts, or escalates based on the policies informed by discovery and red teaming.

Observability and continuous monitoring

Guardrail decisions, tool-call traces, agent step logs, and cost-latency metrics flow into the observability layer, which aggregates them into dashboards, alerts, and audit trails. Monitoring closes the loop: it surfaces emerging attack patterns, tracks guard effectiveness over time, identifies which MCP tools are being invoked most frequently, flags anomalous agent behavior, and provides the evidence that regulators and auditors require.

The key insight is that guardrails are most effective when they are not treated as a standalone content filter but as part of an integrated security and observability program. Discovery tells you what to guard. Red teaming tells you how it breaks. Guardrails enforce the rules. Observability tells you whether the rules are working, in real time, with full trace evidence across every layer, from the first user message to the last tool call.

Related guides

For deeper dives into the topics referenced here, see What is AI red teaming?, Best AI guardrails in 2026, and the OWASP Top 10 for Agentic AI. To explore our runtime guardrails directly, see the runtime security product page.

Related guides

Continue reading

PLAYBOOK

Best AI Guardrails in 2026: Tools, Architecture, and How to Choose

An analytical guide to the AI guardrails landscape: the architecture behind runtime safety, the ten tools that matter, what adversarial benchmarks actually show, and the decisions that determine whether guardrails hold under pressure.

Read

PRIMER

MCP Server Security: A Threat Model for Agent Tool Supply Chains

The Model Context Protocol expanded what AI agents can reach, and expanded the attack surface across at least nine distinct vectors. A primary-source threat model for MCP servers, with concrete controls, real CVEs, and the GA Supabase exploit walked end to end.

Read

PLAYBOOK

How to Detect Shadow AI

A practical guide to detecting shadow AI across browser extensions, SWG endpoint agents, network telemetry, SaaS logs, endpoint agents, AI gateways, and MCP gateways.

Read

Newsletter

Get the next research note.

Short updates on agent attacks, red-team methods, runtime guardrails, and production AI security.

Occasional updates. Unsubscribe anytime.