What Is AI Red Teaming? A Practitioner's Guide

What is AI red teaming?

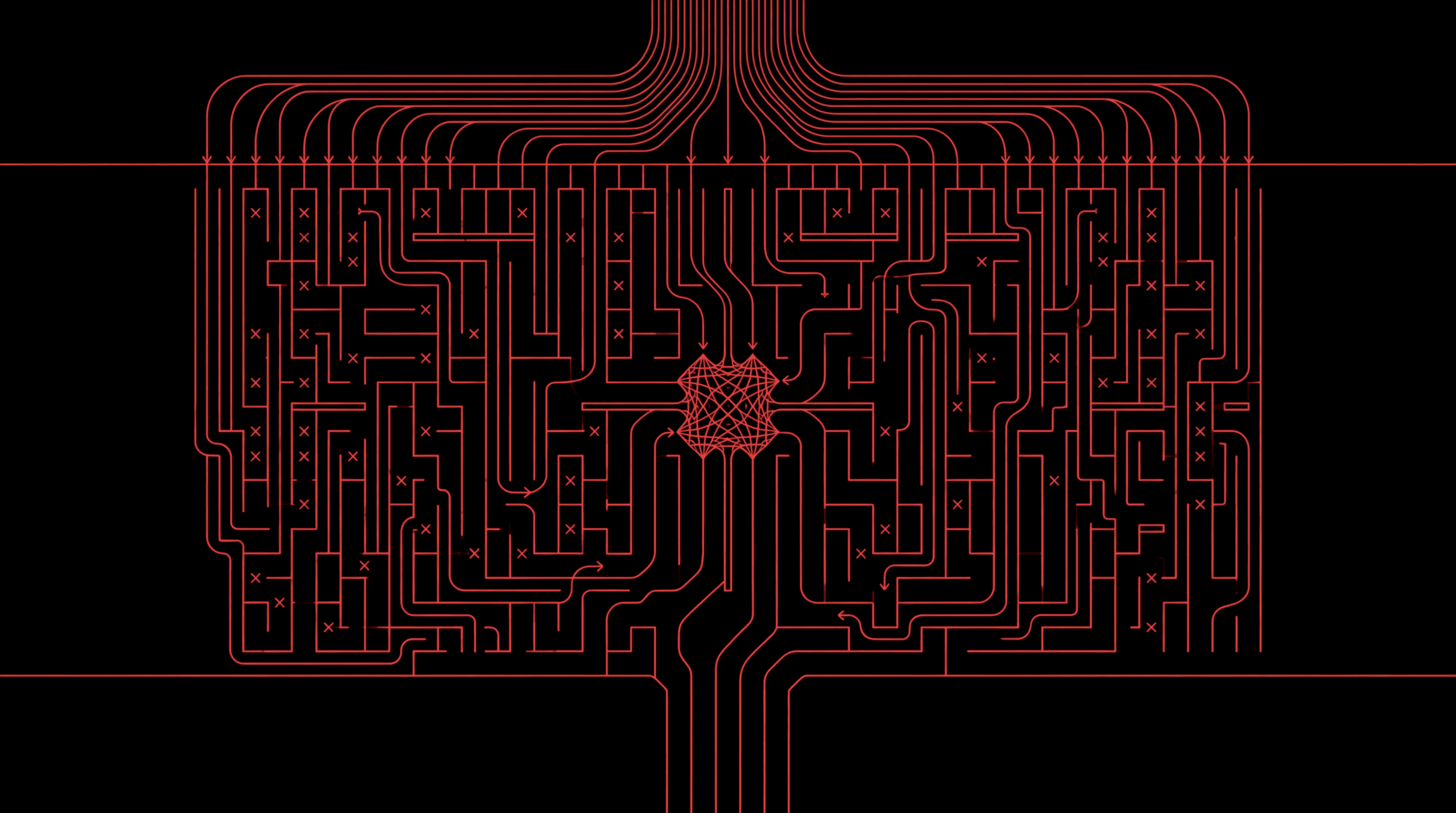

AI red teaming is a structured process of simulating adversarial attacks against AI systems to discover exploitable vulnerabilities before attackers do. Unlike standard QA, benchmarking, or RLHF-based evaluation, red teaming is adversarial by design: the goal is to find ways to make the system fail, not to measure how often it succeeds.

Traditional cybersecurity red teaming targets infrastructure — networks, servers, access controls. AI red teaming targets a fundamentally different attack surface: model behavior, tool-call chains, data pipelines, and the full composition of components in an agentic system. The distinction matters because AI systems are probabilistic, their outputs change with every prompt and context window, and the failure modes are behavioral rather than architectural.

The most important framing: red teaming is adversarial search, not evaluation. You are not scoring the model on a fixed benchmark. You are actively searching for exploitable behavior across prompts, tools, permissions, retrieval flows, and agent actions. A model can score perfectly on safety evaluations and still be vulnerable to a well-crafted multi-step attack that exploits the gap between the model, its tools, and its operating context.

This applies to any AI system with real-world authority: LLMs powering customer service, copilots generating code, agents executing financial transactions, and any system that interacts with tools, APIs, or databases through protocols like the Model Context Protocol (MCP).

Why AI red teaming matters

AI systems have moved from research demos to production infrastructure. They handle customer data, execute transactions, call external tools, and make decisions with real business consequences. The risk profile has fundamentally changed.

The critical shift is from model-level to system-level risk. Early AI safety work focused on what a chatbot might say. Today's agents don't just generate text — they take actions. They invoke tools through function calling and MCP servers. They query databases, send emails, process payments, and modify production systems. A jailbreak that produces offensive text is one category of risk. A jailbreak that triggers an unauthorized financial transaction is a different category entirely.

Simon Willison describes this as the lethal trifecta: when an AI agent has access to private data, is exposed to untrusted content, and can communicate externally, the conditions for catastrophic exploitation are met. LLMs follow instructions in content indiscriminately — they cannot reliably distinguish between the developer's system prompt and a malicious instruction embedded in a retrieved document. As Willison has also documented, MCP integrations introduce additional attack surfaces including tool poisoning, tool shadowing, and rug pulls that traditional security testing will never surface.

These are not theoretical risks. In 2026 alone, a malicious GitHub issue title compromised 4,000 developer machines through a code assistant's triage bot, and researchers demonstrated private repository exfiltration through GitHub's official MCP server. Static evaluations miss these interactive, multi-step failures. A safety benchmark tests the model against a fixed prompt set. An adversarial red team tests what happens when an attacker can steer the model across multiple turns, inject instructions through retrieved content, or exploit an over-privileged tool. These are the failures that actually matter in production.

Regulatory pressure is accelerating this shift. The US Executive Order on AI, the EU AI Act, the NIST AI Risk Management Framework, the OWASP Top 10 for LLMs, and the OWASP Top 10 for Agentic AI all point toward adversarial testing as either a requirement or a strong recommendation. The agentic AI list is particularly relevant — it identifies risks like agent goal hijacking, tool misuse, privilege abuse, and cascading failures that only surface through adversarial testing of the full system, not model-level benchmarks. See our detailed analysis of the OWASP Agentic AI Top 10. The direction is clear: organizations deploying AI agents in production will need to demonstrate that they have tested them adversarially.

The real-world evidence is already here. In our research, we found that 50+ customer service agents could be manipulated into fabricating benefits worth millions — not through sophisticated zero-day exploits, but through adversarial steering that existing safety measures failed to catch.

What it tests

A mature AI red teaming program covers both direct model abuse and system-level exploitability. The attack surface divides into two tiers, and most organizations only test the first.

Model-level testing

Model-level testing targets the LLM itself — its ability to follow instructions, refuse unsafe requests, and maintain policy alignment under adversarial pressure.

- Jailbreaks and refusal bypasses — social engineering, encoding tricks, role-play exploits, and multi-turn manipulation sequences that convince the model to ignore safety training. Techniques like GCG, TAP, and AutoDAN represent the current state of the art. See our Jailbreak Cookbook for a practitioner-oriented overview.

- Prompt injection — both direct injection through user input and indirect injection through retrieved context, tool outputs, or any text that enters the model's context window.

- Hallucination under adversarial pressure — crafted prompts that maximize confabulation rates in specific domains, producing authoritative-sounding but fabricated information.

- Policy evasion and safety filter circumvention — systematic testing of content policies, refusal mechanisms, and output filters to find gaps in coverage.

System-level testing

System-level testing is where AI red teaming diverges most sharply from traditional evaluation — and where the highest-impact vulnerabilities live.

- Tool misuse and over-permissioned agents — testing whether an agent can be steered into invoking tools it shouldn't have access to, or using authorized tools in unauthorized ways.

- Multi-step exploit chains — attacks that span memory, retrieval, planning, and tool calls, where no single step looks malicious but the chain produces a harmful outcome.

- MCP and integration-layer vulnerabilities — exploiting trust boundaries between the model and external systems connected through MCP servers, function calling, or API integrations.

- Data exfiltration through retrieved context — using prompt injection to trick the model into leaking sensitive data from RAG pipelines, databases, or internal documents through its responses.

- Cross-system attacks — exploits that chain multiple integrations together, such as forging conversation metadata to trigger tool invocations across messaging platforms and payment systems.

| Model-level attacks | System-level attacks |

|---|---|

| Jailbreaks and refusal bypasses | Tool misuse and over-permissioned agents |

| Direct prompt injection | Multi-step exploit chains across memory and tools |

| Hallucination and confabulation | MCP and integration-layer exploitation |

| Safety filter circumvention | Data exfiltration through RAG pipelines |

| Policy evasion | Cross-system attack chains |

AI vs traditional

AI red teaming and traditional cybersecurity red teaming share a mindset — think like an attacker, find the weaknesses before they do — but they target fundamentally different systems and require different expertise.

| Aspect | Traditional red teaming | AI red teaming |

|---|---|---|

| Target | Networks, servers, access controls | Models, agents, tool chains, data pipelines |

| Attack surface | Static infrastructure | Dynamic, probabilistic, context-dependent |

| Techniques | Pen testing, social engineering, physical intrusion | Adversarial prompts, jailbreaks, tool abuse, multi-step chains |

| Failure mode | Unauthorized access | Unsafe outputs, unintended actions, data leakage, policy violations |

| Reproducibility | Generally reproducible | Variable — same prompt can produce different outputs |

| Testing cadence | Periodic (annual/quarterly) | Continuous — retest after every model update, prompt change, or tool addition |

| Team composition | Security engineers | ML engineers + security + domain experts |

The core reason this distinction matters: AI systems are probabilistic. Their attack surfaces change with every deployment update — a new model version, a revised system prompt, or an additional tool integration can introduce entirely new vulnerability classes. Traditional penetration testing doesn't cover behavioral failures, and annual testing cadences are insufficient when the system under test changes weekly. AI red teaming must be continuous, adaptive, and aware of the full system — not just the model in isolation.

Microsoft's AI Red Team reinforced this in their report on lessons from red teaming 100 generative AI products: "AI red teaming is not safety benchmarking" and "the work of securing AI systems will never be complete." Their experience confirms that red teaming must focus on probing end-to-end systems rather than individual models — the same conclusion that drives our approach.

How automated red teaming works

Automated AI red teaming is best understood as adversarial search over an AI system, not as a prettier name for evaluation. The objective is to discover where the system can be manipulated, not merely to measure whether it performs well on a fixed prompt set. Here is how it works in practice.

The process is six phases, each of which loops continuously after every system change.

1. Scope the system

Map the agent's tools, permissions, retrieval sources, and downstream actions. Red teaming an isolated chatbot is fundamentally different from red teaming an agent with database access, payment APIs, and MCP server integrations. The scope determines what attack strategies are relevant and what constitutes a meaningful finding. See our technical documentation for details on system scoping.

2. Model the attacker

Define attacker profiles: an external user with no special access, a compromised data source injecting instructions through retrieved content, or an indirect injection via a tool output. Different threat models require different attack strategies, and the most dangerous vulnerabilities often come from attacker profiles that the development team did not consider.

Tip: Don't only model the external attacker. Some of the most impactful vulnerabilities come from compromised data sources and indirect injection — where the attacker never directly interacts with the model but poisons the content it retrieves or the tools it invokes.

3. Generate adversarial campaigns

Use trained attacker models — not random fuzzing — to generate context-aware attack sequences. General Analysis uses reinforcement-learning-trained attacker models that adapt based on target responses, learning which attack strategies work against specific system configurations. This produces attacks that are far more effective than static prompt sets or template-based fuzzing.

4. Execute and observe

Run attacks, capture full transcripts including tool calls and system responses. Log everything — failed attacks are data too. Every interaction generates signal about the system's defenses, and failed attacks often reveal the boundary conditions of a vulnerability that a slight variation could exploit.

Tip: Capture tool-call traces, not just text responses. In agentic systems, the most dangerous failures happen in what the model does, not what it says. A response that looks safe in text may have triggered an unauthorized database query or API call.

5. Analyze and triage

Prioritize findings by exploitability and business impact, not just novelty. A jailbreak that produces offensive text is different from one that triggers an unauthorized financial transaction. Triage should account for the full blast radius: what data was accessed, what tools were invoked, what downstream systems were affected, and how easily the attack could be reproduced.

6. Remediate and retest

Feed findings into prompt hardening, permission tightening, and guardrail deployment. Then retest to confirm fixes and catch regressions. Every remediation can introduce new vulnerabilities or shift the attack surface — retesting is not optional.

The combination of automated and manual methods is the consensus among leading AI labs. OpenAI uses external red teams alongside automated adversarial generation for every frontier model release. Anthropic's Responsible Scaling Policy mandates structured adversarial testing at each capability threshold. The takeaway: if the organizations building the most capable models treat continuous red teaming as non-negotiable, organizations deploying those models in production should do the same.

Tip: Build red teaming into CI/CD. Every model update, prompt change, or tool addition should trigger an automated red teaming campaign before reaching production. One-time pre-launch testing catches a fraction of the vulnerabilities that continuous testing reveals.

Real exploits

Theory matters less than evidence. These are real vulnerabilities discovered through our red teaming research — not hypothetical risks, but demonstrated exploits against production-grade AI systems.

Customer service agents offering fabricated benefits

We tested 55 real-world customer service agents using an RL-trained attacker model. The attacker manipulated agents into offering unauthorized benefits — including fabricated refunds, free upgrades, and policy exceptions — exceeding $10M in total fabricated value. This demonstrates tool-call exploitation and policy override through adversarial steering at scale.

Claude + iMessage + Stripe coupon minting

We discovered a metadata-spoofing vulnerability in Claude's iMessage integration. An attacker could forge conversation history to trigger MCP tool invocations — resulting in unlimited Stripe coupon creation without user awareness. This demonstrates cross-system exploit chains and MCP security gaps that no model-level evaluation would catch.

Supabase MCP data exfiltration

Through prompt injection via customer support messages, we tricked an LLM assistant into exfiltrating database tables through its MCP connection to Supabase. The attack demonstrated indirect prompt injection leading to data leakage through tool integrations — a vulnerability class that affects any agent with database access.

LLM hallucinations in legal AI

We generated 50,000+ adversarial prompts targeting GPT-4o in the legal domain, producing hallucination rates exceeding 35%. The model fabricated case citations, misrepresented legal precedent, and generated authoritative-sounding analysis with no factual basis. This demonstrates domain-specific risk and the gap between general evaluation and targeted adversarial testing.

Implementation guide

Whether you are building an internal red teaming capability or evaluating external providers, these are the steps that separate rigorous programs from checkbox exercises.

Step 1: Inventory your AI assets

Know what models, agents, tools, and data flows exist before you test them. You cannot red team what you haven't mapped. This includes every model deployment, every tool integration, every MCP server connection, every RAG pipeline, and every downstream system that an agent can affect. Many organizations discover during this step that they have more AI systems in production than they realized.

Step 2: Define threat models and scope

Who is the attacker? What can they access? Are you testing the model, the application, or the full system? Narrow scope produces clearer results. Use frameworks like MITRE ATLAS to structure your threat model around known AI attack techniques, and prioritize the attack surfaces with the highest business impact.

Tip: Start with the systems that have the most authority. An agent that can read a knowledge base is lower risk than one that can modify customer records or process payments. Scope your first red team around the systems where a successful attack has real business consequences.

Step 3: Assemble the right team

AI red teaming requires a mix of ML engineers who understand model behavior, security professionals who think like attackers, and domain experts who can assess whether a model's output is actually correct in context. Automated tools need human judgment for the hardest edge cases and for interpreting findings in business terms.

Step 4: Run adversarial campaigns

Combine automated attack generation with manual deep-dive testing. Start with known attack patterns — jailbreaks, prompt injection, tool abuse — then explore novel chains that are specific to your system's architecture. Log every interaction, including failed attacks, tool-call traces, and system responses.

Tip: Test the full chain, not just the model. If your agent can call ten tools, your red team should test what happens when the attacker steers the model through multi-step tool-call sequences — not just whether a single prompt produces an unsafe response.

Step 5: Triage by business impact

Not all vulnerabilities are equal. An offensive text generation finding is lower severity than an unauthorized financial transaction or a data exfiltration through a tool integration. Triage by exploitability, blast radius, and real-world business impact — not by how clever the attack looks.

Step 6: Remediate and deploy guardrails

Fix root causes through prompt hardening, permission tightening, and architectural changes. Deploy runtime guardrails as a safety net for the vulnerability classes that cannot be eliminated at the source. See our guide to the best AI guardrails for an evaluation framework.

Step 7: Retest continuously

Every model update, prompt change, or tool addition can introduce regressions. Build red teaming into CI/CD, not just pre-launch. The goal is a continuous security signal, not a point-in-time report. Organizations that treat red teaming as a one-off exercise before launch will miss the vulnerabilities introduced by the inevitable changes that follow.

Tools and frameworks

The AI red teaming tooling landscape is maturing rapidly. Here is an honest overview of the major options, their strengths, and where each fits.

Open-source frameworks

- Microsoft PyRIT — Python-based framework for generative AI red teaming. Supports multi-turn attack strategies (Crescendo, TAP, Skeleton Key), multiple target platforms, and built-in scoring. Strong for automated red teaming against model endpoints.

- Promptfoo — Developer-friendly LLM testing and red teaming framework. Scans for 50+ vulnerability types including jailbreaks, prompt injection, and PII leaks. Good for integrating red teaming into CI/CD workflows.

- NVIDIA Garak — LLM vulnerability scanner that probes for hallucination, data leakage, prompt injection, toxicity, and jailbreaks. Supports multiple LLM platforms.

- Published attack techniques — The research community has published techniques like GCG, TAP, Crescendo, and AutoDAN that can be implemented independently. See our Jailbreak Cookbook and Redact and Recover for practitioner-oriented breakdowns.

Commercial platforms

The commercial landscape is evolving quickly. Most platforms specialize in a particular slice of the problem:

- Mindgard — Focuses on continuous automated AI risk assessment. Strongest on model-level vulnerability scanning and compliance-oriented reporting. Useful for teams that need repeatable assessments mapped to regulatory frameworks.

- HiddenLayer — Specializes in AI threat detection and model supply chain security. Their focus is more on protecting model artifacts (weights, pipelines) from tampering and adversarial ML attacks than on prompt-level or agentic testing.

- Lakera — Primarily a prompt security platform. Strong on real-time prompt injection detection and content filtering. More of a guardrail than a red teaming tool, but useful as a defensive layer that red teaming findings can inform.

- General Analysis — Built for system-level testing of agentic AI. Differentiators include tool graph mapping, RL-trained attacker models that adapt to target responses, multi-step exploit chain detection, and testing that covers the full agent system — tools, permissions, and integrations — not just the model endpoint. See our technical docs and benchmarks.

How to choose

Open-source frameworks work well for model-level testing — probing a single model endpoint for jailbreaks, injection, and content policy violations. Commercial platforms add the most value for system-level testing of agents with tool access, real permissions, and multi-step workflows where the attack surface extends far beyond the model itself.

The key question: are you testing a model, or are you testing a system? If your AI deployment involves tool calling, MCP integrations, database access, or any form of agent autonomy, you need tools that can map and test the full system — not just the language model at the center.

Regulations

Adversarial testing is shifting from best practice to regulatory expectation. Multiple frameworks now reference or require some form of structured adversarial testing for AI systems, and the trend is accelerating.

| Framework | Red teaming requirement | Key detail |

|---|---|---|

| US Executive Order on AI (Oct 2023) | Red teaming of dual-use foundation models | Sections 4.1, 10.1 |

| EU AI Act (2024) | Adversarial testing for high-risk AI | Pre-market requirement |

| NIST AI RMF | Adversarial testing under resilience guidance | MAP and MEASURE functions |

| OWASP Top 10 for LLMs | Red teaming as mitigation for all 10 risks | Covers prompt injection, data leakage, excessive agency |

| OWASP Top 10 for Agentic AI (2026) | Agent-specific adversarial testing | See our analysis |

| ISO/IEC 42001 | AI management system with risk assessment | Adversarial testing implied under risk management |

| MITRE ATLAS | Adversarial threat landscape for AI systems | Attack techniques and mitigations catalog |

The direction is clear — adversarial testing is shifting from best practice to regulatory expectation. Organizations building AI agents should treat red teaming as a compliance requirement, not an optional extra. The regulatory landscape will only get stricter as AI systems gain more autonomy and access to more critical business functions.

AI red teaming FAQ

Six common questions about AI red teaming, evaluation, automation, and how it differs from traditional penetration testing.

Continue reading

Explore our automated red teaming product, the runtime guardrails guide, the best AI guardrails comparison, the OWASP Top 10 for agentic AI, and our latest research and benchmarks.

Related guides

Continue reading

FRAMEWORK

OWASP Top 10 for Agentic AI: What Matters Most?

An analytical guide to the OWASP Top 10 for Agentic Applications 2026: what the ten risks are, how they relate to each other, and what they imply for builders of agentic systems.

Read

PRIMER

MCP Server Security: A Threat Model for Agent Tool Supply Chains

The Model Context Protocol expanded what AI agents can reach, and expanded the attack surface across at least nine distinct vectors. A primary-source threat model for MCP servers, with concrete controls, real CVEs, and the GA Supabase exploit walked end to end.

Read

PLAYBOOK

Best AI Guardrails in 2026: Tools, Architecture, and How to Choose

An analytical guide to the AI guardrails landscape: the architecture behind runtime safety, the ten tools that matter, what adversarial benchmarks actually show, and the decisions that determine whether guardrails hold under pressure.

Read

Newsletter

Get the next research note.

Short updates on agent attacks, red-team methods, runtime guardrails, and production AI security.

Occasional updates. Unsubscribe anytime.